Creating a Collaborative Syllabus Using Moodle

- By Emmett Dulaney

- 03/24/08


A "collaborative syllabus" is one in which students help determine the specifics of a course--a novel concept in K-12 but one that is being applied in higher education. Those specifics can be any element with which an instructor is willing to be flexible (objectives, grading, attendance policies, types of assignments, and so on). The logic behind this tool is that by actively participating in the creation of the syllabus, students are able to signal what they want to learn and how they want to learn it and then (potentially) set the standard by which they will be held accountable.

Although the collaborative syllabus has been used successfully before, it has suffered in one crucial area: it requires too much class time to create. Being unfamiliar with the concept, students first had to have it explained to them in one class period. Following that, there would be several sessions in which they would discuss their thoughts, vote on what to incorporate or exclude, and edit the existing document. Given the constraints of the typical 15-week semester, every session is dear, and it is difficult to lose one to such a process, let alone three or four.

In pursuit of a better approach that saved class time, we at Anderson University turned to Moodle for an experiment. The more input students could have in the process outside of class, the more class time could be saved for covering the material. Given that, the creation of the collaborative syllabus was approached as a three-step process. This article details the steps taken and the results of walking through this process.

Step 1: Assessment of Students

Traditionally, a large number of students at Anderson University who take the eBusiness course in the fall then take the eCommerce course in the spring (the course for which the collaborative syllabus is being created). With four weeks to go before the end of the fall semester, students in the eBusiness course were asked to access the ATTLS survey in Moodle and answer the questions asked as openly and honestly as possible.

The Attitudes Toward Thinking and Learning (ATTLS) survey was developed to identify "connected" and "separate" learning styles (Galotti & Clinchy, 1999). According to the authors of the survey instrument, "Separate knowing ... involves objective, analytical, detached evaluation of an argument or piece of work," while "Connected knowers look for why it makes sense, how it might be right"; they "... look at things from the other's point of view, in the other's own terms, and try first to understand the other's point of view rather than evaluate it" (Galotti & Clinchy, 1999).

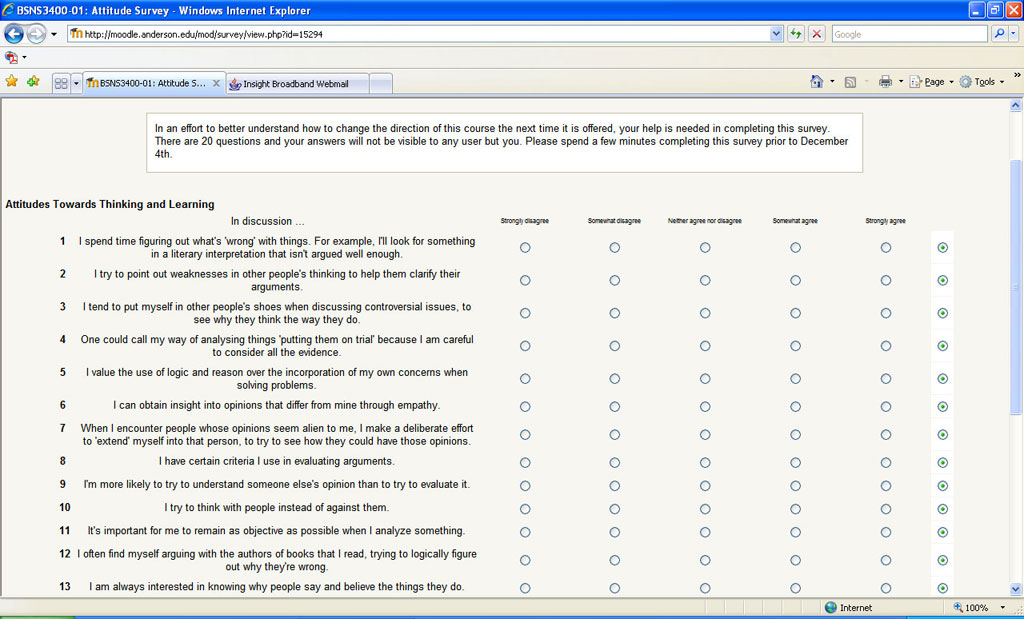

Moodle includes the ability to administer this instrument utilizing a 20-question, five-point Likert scale as shown below.

Verbally, the students were told that although Moodle records who accesses each element, that information would not be examined, and every survey would be considered anonymous. Within the survey, the information relayed to them prior to starting it was as follows:

In an effort to better understand how to change the direction of this course the next time it is offered, your help is needed in completing this survey. There are 20 questions and your answers will not be visible to any user but you. Please spend a few minutes completing this survey prior to December 4th.

The students were also told verbally that the survey was the first step in the creation of the syllabus for the upcoming eCommerce course; this served as an additional incentive for those students signed up for that course to complete it. Of the 22 students enrolled in eBusiness, 12 completed the survey.

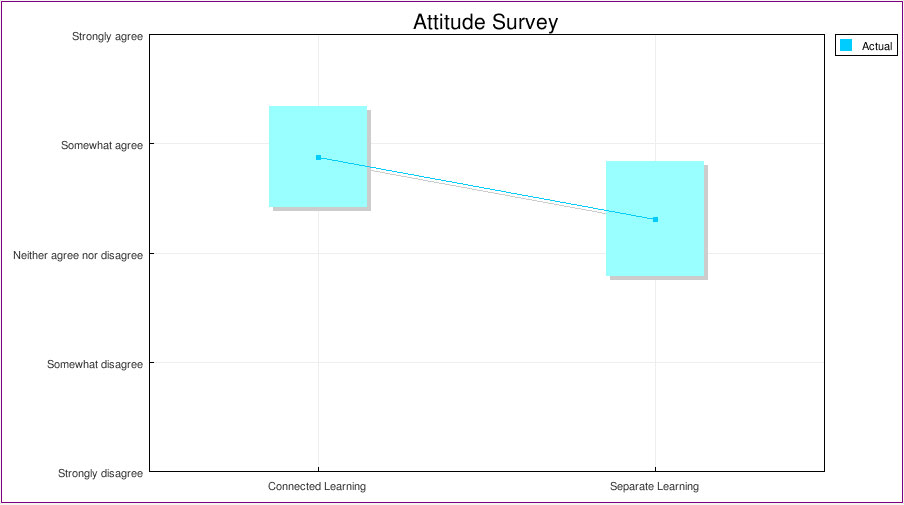

ATTLS maps the difference between connected learning and separate learning, and the following shows the summary of these based upon the twelve responses to the 20 questions.

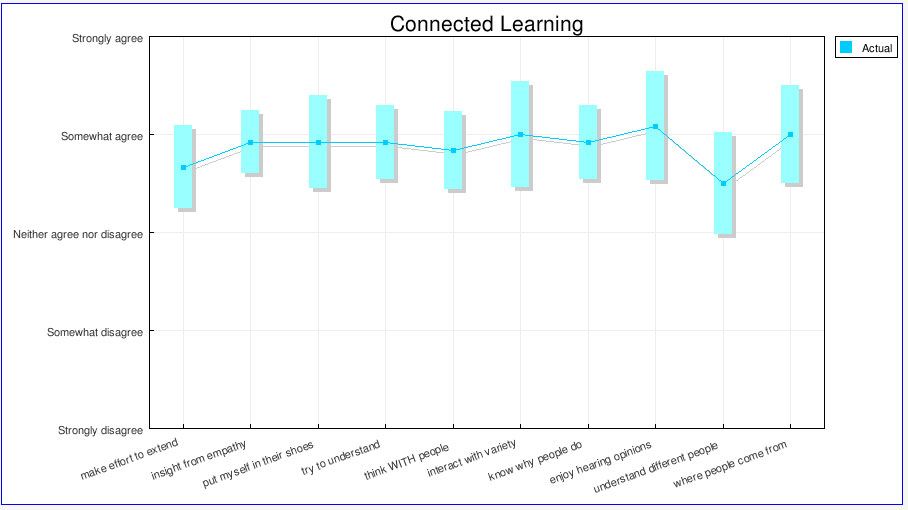

The actual list of questions, and mean/median/mode for each, are as follows:

| Question | Mean | Median | Mode |
| --- | --- | --- | --- |
| 1. When I encounter people whose opinions seem alien to me, I make a deliberate effort to 'extend' myself into that person, to try to see how they could have those opinions | 4 | 4 | 4 |
| 2. I can obtain insight into opinions that differ from mine through empathy. | 4 | 4 | 4 |
| 3. I tend to put myself in other people's shoes when discussing controversial issues, to see why they think the way they do. | 4 | 4 | 4 |
| 4. I'm more likely to try to understand someone else's opinion than to try to evaluate it. | 4 | 4 | 4 |
| 5. I try to think with people instead of against them. | 4 | 4 | 4 |
| 6. I feel that the best way for me to achieve my own identity is to interact with a variety of other people. | 4 | 4 | 5 |
| 7. I am always interested in knowing why people say and believe the things they do. | 4 | 4 | 4 |
| 8. I enjoy hearing the opinions of people who come from backgrounds different to mine - it helps me to understand how the same things can be seen in such different ways. | 4 | 4 | 5 |
| 9. The most important part of my education has been learning to understand people who are very different to me. | 3 | 4 | 4 |
| 10. I like to understand where other people are 'coming from', what experiences have led them to feel the way they do. | 4 | 4 | 4 |
| 11. I like playing devil's advocate - arguing the opposite of what someone is saying. | 3 | 3 | 3 |
| 12. It's important for me to remain as objective as possible when I analyze something. | 3 | 3 | 4 |
| 13. In evaluating what someone says, I focus on the quality of their argument, not on the person who's presenting it. | 3 | 4 | 4 |
| 14. I find that I can strengthen my own position through arguing with someone who disagrees with me. | 4 | 4 | 5 |
| 15. One could call my way of analyzing things 'putting them on trial' because I am careful to consider all the evidence. | 4 | 4 | 4 |
| 16. I often find myself arguing with the authors of books that I read, trying to logically figure out why they're wrong. | 3 | 2 | 2 |
| 17. I have certain criteria I use in evaluating arguments. | 3 | 3 | 3 |
| 18. I try to point out weaknesses in other people's thinking to help them clarify their arguments. | 3 | 4 | 4 |
| 19. I value the use of logic and reason over the incorporation of my own concerns when solving problems. | 3 | 3 | 3 |
| 20. I spend time figuring out what's 'wrong' with things. For example, I'll look for something in a literary interpretation that isn't argued well enough. | 3 | 3 | 4 |
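For readers who want to reproduce this kind of per-question summary from exported responses, the following is a minimal Python sketch. The response list is hypothetical (the individual answers are not listed in this article), and rounding the mean to a whole number is an assumption based on how the table appears to report it.

```python
from statistics import mean, median, mode

def summarize(responses):
    """Return (rounded mean, median, mode) for one question's 1-5 Likert responses."""
    return round(mean(responses)), median(responses), mode(responses)

# Hypothetical responses from 12 students to a single question.
# These are NOT the actual survey data, which the article does not list.
hypothetical_responses = [1, 2, 2, 2, 2, 2, 2, 4, 4, 5, 5, 5]

print(summarize(hypothetical_responses))
```

With this made-up data, a handful of high ratings pulls the mean up to 3 while the median and mode stay at 2, which is one way a row can show a mean higher than its median and mode.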

It is possible to break down the results for each question associated with connected learning (CK) and arrive at the following scale:

Similarly, the scale results for separate learning (SK)--based upon the questions asked--are:
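As a rough illustration of how such scale scores can be derived, the Python sketch below averages the per-question means from the table. It assumes that questions 1-10 are the connected-knowing items and questions 11-20 are the separate-knowing items, which matches the wording of the questions but is not stated explicitly here. Because the table values are whole numbers, the output is only an approximation and should not be read as the scale results Moodle reported.

```python
from statistics import mean

# Per-question means copied from the table (questions 1-20, whole numbers).
question_means = [4, 4, 4, 4, 4, 4, 4, 4, 3, 4,
                  3, 3, 3, 4, 4, 3, 3, 3, 3, 3]

# Assumption: items 1-10 measure connected knowing (CK),
# items 11-20 measure separate knowing (SK).
ck_score = mean(question_means[:10])
sk_score = mean(question_means[10:])

print(f"CK: {ck_score:.1f}  SK: {sk_score:.1f}")
```

On these figures, the connected-knowing items average somewhat higher than the separate-knowing items.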

Step 2: Acquiring Input

Based upon the learning styles identified by the ATTLS survey, the students enrolled in the eCommerce course were then contacted individually (those currently in eBusiness and those not) and asked to provide specific input about what they hoped to gain from the upcoming course. This was done by means of an e-mail sent to all enrolled students asking them to log into Moodle and answer a few questions.

Moodle includes a useful activity, Choice, for asking a single question with a limited set of answers. Unfortunately, Choice was much too limited for this undertaking. Instead, a Quiz titled Pre-Course Survey was created. The disadvantage of using a Quiz for this endeavor was that Moodle expects to grade it, and this had to be explained in the e-mail the students received:

Greetings!

The reason you are receiving this e-mail is that you are currently enrolled in the eCommerce (BSNS 4400) course this coming spring semester. It is that time of year when I must start putting together a syllabus, and I would like to shake things up a bit from what has been done in the past (my babbling bores even me after a few years of the topic). Before making changes, though, I think the most important thing to do is find out what you are expecting and want - what would be most beneficial to you.

In order to simplify things, I've created a stub for the course in Moodle and added a quiz - it is not really a quiz but Moodle lacks a decent survey module. Between now and December 5th, I would like you to do the following:

1. Access Moodle

2. Pick this course - BSNS 4400 - from the list of available courses.

3. Pick the Pre-Course Survey (it is the only thing listed) and answer the five questions. Because I am using a quiz module to give a survey, you may get a message saying that you didn't get all the points, etc. Just ignore that. This is not being done for points - it is being done solely for your collaboration in helping create a more meaningful syllabus.

Your help and participation (before December 5th) is appreciated, and I look forward to seeing you in class in January.

Five questions were asked:

1. There can be as few as one exam for this course, or as many as four. IF there is one, it will be the final, and all exam points will be allotted to it. IF there are two exams, there will be a midterm and final and the exam points will be evenly allotted between the two of them. The same pattern will be followed for three or four exams. How many exams would you like the course to include?

This was a single-answer, multiple-choice question with four choices (one, two, three, and four).

2. When it comes to grading, please prioritize the following in terms of the weight they should carry for the course:

This was done as a matching question. The choices were: Exam(s), Quizzes, Presentations, Projects (experiential), Participation, and Attendance. The possibilities for matching ranged from "most weight" to "least weight".

3. In terms of percentage of time spent on activities for the class, please rank the following:

This was also done as a matching question. The choices were: Lecture, Projects (group and individual), Media Presentations (video in class, etc.), Open class discussions, and Online activities instead of class. The possibilities for matching ranged from "More time should be devoted to this than any other activity" to "This should be the least most common activity".

4. What are three things that you want to learn as a result of taking this class?

This was given as a short answer question.

5. The grading scale is open, and a number of schools use different scales. Which of the following should constitute an "A" (we can then work backward from there to fill out the rest of the grading scale)?

This was a single-answer, multiple-choice question with four choices (95-100%, 93-100%, 90-100%, 88-100%).
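The allotment rule described in the first question (all points to the final if there is one exam, an even split otherwise) can be sketched in a few lines of Python. The total of 400 exam points is a hypothetical figure, and sending any leftover point from an uneven split to the final exam is an assumption made only for this sketch.

```python
def allot_exam_points(total_points, num_exams):
    """Split the exam points evenly across one to four exams."""
    if not 1 <= num_exams <= 4:
        raise ValueError("the survey offered between one and four exams")
    share, remainder = divmod(total_points, num_exams)
    points = [share] * num_exams
    # Assumption: leftover points from an uneven split go to the final exam.
    points[-1] += remainder
    return points

# Hypothetical total of 400 exam points; the real figure is not given.
for n in range(1, 5):
    print(n, allot_exam_points(400, n))
```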

After the students took the quiz and it closed, the next step was to examine the Results, and particularly the Item Analysis. In this case, it showed the following results:

- By an overwhelming margin, students preferred three exams.

- The preferred priority was: Exams, Participation, Projects, Presentations, Quizzes, and Attendance.

- The results on the percentage question were too diverse to be meaningful. This left me to use the results from the second question as a proxy during this phase.

- Answers to the open-ended question ranged from "I honestly have no idea what I want from this class" to specific issues involving Web site creation, moving from brick and mortar to eCommerce, and so on.

- By a slim margin, students preferred the 93-100% range for an "A", and this could be attributable to it being the standard scale used at this university.
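One way to turn individual answers to a ranking-style matching question into a single priority order is to average each item's position across respondents. The Python sketch below shows that approach. It is not necessarily how the Item Analysis report was read in this case, and the ballots are hypothetical, not the students' actual answers.

```python
from collections import defaultdict

def rank_by_mean_position(ballots):
    """Order items by their average position (1 = most weight) across ballots."""
    positions = defaultdict(list)
    for ballot in ballots:
        for position, item in enumerate(ballot, start=1):
            positions[item].append(position)
    averages = {item: sum(p) / len(p) for item, p in positions.items()}
    return sorted(averages, key=averages.get)

# Hypothetical ballots, each listing items from most to least weight.
# Illustrative only; these are NOT the students' actual responses.
ballots = [
    ["Exam(s)", "Participation", "Projects", "Presentations", "Quizzes", "Attendance"],
    ["Participation", "Exam(s)", "Projects", "Quizzes", "Presentations", "Attendance"],
    ["Exam(s)", "Projects", "Participation", "Presentations", "Attendance", "Quizzes"],
]

print(rank_by_mean_position(ballots))
```

Averaging positions is a simple choice that tolerates disagreement between respondents; when answers are as scattered as those to the third question, the averages bunch together and the resulting order carries little meaning.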

Step 3: Fine Tuning

Given the student responses, the syllabus for the course was created. Before the fall semester ended, the syllabus for this spring course was posted online for the students to see. Not surprisingly, many students chose not to access it before leaving for break.

The week that classes were scheduled to begin, the syllabus was sent as an attachment to the enrolled students with the following message:

Greetings!

Attached is the syllabus for the eCommerce course as it now stands. This syllabus was created using the input many of you provided in the survey (through Moodle) at the end of last semester. Please look it over and consider areas that you would like to tweak or fine tune. We will discuss - and finalize - this the first day of class.

Thank you,

At the first class session, there were several students who had signed up at the last minute and had not been able to be a part of the development. During that session, the syllabus was reviewed, and an overview of the process used to create it was given. The floor was opened to all students for suggestions, modifications, and other discussion. Surprisingly, all of the discussion was positive, and the students expressed appreciation for being allowed to have a say in the methodology. After only 20 minutes, the discussion ended with the syllabus being left as it was prior to the start of class.

The 20 minutes of class time proved much more bearable than several entire class sessions, and the experiment was deemed a success that will be repeated in subsequent semesters.

Reference

Galotti, K.M. & Clinchy, B.M. (1999). A New Way of Assessing Ways of Knowing: The Attitudes Toward Thinking and Learning Survey (ATTLS). Sex Roles, 40 (9/10), 745-766.


About the author: Emmett Dulaney is an assistant professor at Anderson University and the author of several books on technology-related topics. He is a regular contributor to CertCities.
